AI Search Mistakes That Kill Your Visibility in 2026

Avoid the most costly AI search optimization mistakes hurting your brand visibility on ChatGPT, Gemini, Claude, and Perplexity in 2026.

| Key Insight | Explanation |
|---|---|
| AI search uses different signals than Google | ChatGPT, Gemini, Claude, and Perplexity weigh authority, structured data, and citation signals, not just keyword rankings. |
| Schema markup is not optional | AI crawlers rely heavily on schema markup (structured data that tells AI engines what your business does) to understand and trust your content. |
| Content frequency matters more than ever | AI engines favor brands that publish fresh, authoritative content consistently, not sites that post once a month. |
| Ignoring llms.txt is a costly oversight | llms.txt (a proposed file that points large language models to your most important content) helps AI systems understand what to read and recommend from your site. |
| Visibility tracking is now essential | Without monitoring how your brand appears in AI engine responses, you can't know whether your optimization efforts are working. |
| SMBs can compete without agencies | Automated tools purpose-built for GEO/AEO let small businesses fix these mistakes at a fraction of traditional agency costs. |

Why These Mistakes Cost You Customers in 2026

AI search optimization mistakes are silently draining customer traffic from thousands of small businesses right now. A potential customer opens ChatGPT, types "best [your service] near me," and gets a confident recommendation — for your competitor. Your website exists. Your Google ranking is decent. But AI engines like Gemini, Claude, and Perplexity don't know you well enough to recommend you, and the reasons why are almost always fixable technical and content errors.

By some estimates, roughly 40% of Google searches now end without a click as users shift toward AI-generated answers [1]. Perplexity alone reported over 100 million weekly queries by late 2024, and that number has grown substantially since [2]. This isn't a future trend to prepare for. It's happening to your business today.

This article covers the 7 most damaging AI search optimization mistakes SMBs make, what causes them, and exactly what to do about each one. We'll also explain how to choose the right approach for your situation — whether you're a solo founder, a Shopify store owner, or a local service business competing in a crowded niche.

Mistake 1: Treating AI Search Like Traditional Google SEO

The single biggest AI search optimization mistake businesses make is assuming that ranking well on Google automatically means being recommended by AI engines. It doesn't. Google's crawler evaluates backlinks, keyword placement, and page authority. ChatGPT, Gemini, Claude, and Perplexity use a fundamentally different logic — they pull from training data, real-time web retrieval, and structured signals to construct answers, not just rank pages [3].

Why the Signals Differ

Traditional SEO (search engine optimization — the practice of improving visibility in search engine results) was built around a crawler that reads text and counts links. AI search engines are large language models (LLMs) that synthesize information. They look for:

- Clear entity definitions — does your site explicitly say what your business is, who it serves, and where?

- Authoritative, cited content — are other credible sources referencing you?

- Structured data signals — can the AI parse your content without guessing?

- Consistent topical coverage — do you publish enough on your niche to be considered an authority?

As one industry analysis puts it, "The biggest mistake companies make with AI search is treating it like SEO" [4]. The optimization frameworks are simply different disciplines.

What to Do Instead

Stop measuring success purely by Google rankings. Start asking: "Does ChatGPT mention my brand when someone asks about my category?" That's a different question, and it requires a different strategy — one built around GEO (Generative Engine Optimization, which focuses on how AI systems extract and present information) and AEO (Answer Engine Optimization, which targets direct answer retrieval by AI engines).

Pro Tip: Run a simple test today. Open ChatGPT or Perplexity and search for your product or service category in your city. If a competitor appears and you don't, that's your baseline problem — and it's almost always fixable with the right technical and content changes.

A common mistake practitioners make here is continuing to invest exclusively in backlink building while neglecting the structured data and content signals that AI engines actually use to form recommendations [5].

Mistake 2: Missing or Broken Structured Data and Schema Markup

Missing or invalid schema markup is one of the most technically damaging AI search optimization mistakes, because AI crawlers rely on structured data to understand your business — without it, they're guessing. Schema markup is the structured data vocabulary (typically implemented as JSON-LD code on your site) that explicitly tells AI engines what your business does, what products you sell, where you're located, and what your reviews say [6].

Why Schema Matters for AI Engines

Insightland states it bluntly: "AI crawlers heavily rely on schema markup to understand content. This is one of the biggest GEO mistakes you can make" [6]. Validation errors in your schema act as locked doors: the AI engine can't reliably extract your business information, so it defaults to recommending brands whose data is clean and parseable.

The most commonly missing or broken schema types for SMBs include:

- LocalBusiness schema — name, address, phone, hours, category

- Product schema — price, availability, reviews, SKU

- FAQ schema — question-and-answer pairs that AI engines extract directly

- Review/AggregateRating schema — star ratings and review counts

- Article schema — author, publish date, headline for blog content

How to Audit and Fix Your Schema

- Run your site through Google's Rich Results Test to identify validation errors.

- Check for missing entity types relevant to your business category.

- Prefer JSON-LD (the format Google recommends, and the easiest for crawlers to parse) over Microdata.

- Add FAQ schema to your most important service and product pages.

- Validate after every site update — schema breaks when page structure changes.
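To make the JSON-LD step concrete, here is a minimal Python sketch that builds LocalBusiness and FAQPage markup and wraps both in a single `<script type="application/ld+json">` tag. It is an illustration under stated assumptions, not a drop-in implementation: the business details, the `CafeOrCoffeeShop` subtype, and the helper names (`local_business_jsonld`, `faq_jsonld`, `script_tag`) are hypothetical. The FAQ answers you mark up should also match questions that are actually visible on the page.

```python
import json

# Hypothetical business details, for illustration only: replace with your own.
business = {
    "name": "Example Coffee Roasters",
    "type": "CafeOrCoffeeShop",  # a schema.org subtype of LocalBusiness
    "street": "123 Main St",
    "city": "Springfield",
    "region": "IL",
    "postal_code": "62701",
    "country": "US",
    "phone": "+1-217-555-0100",
    "url": "https://www.example.com",
    "hours": ["Mo-Fr 07:00-18:00", "Sa-Su 08:00-16:00"],
}

# Hypothetical FAQ pairs; mark up only questions that appear on the page.
faqs = [
    ("Do you roast your own beans?", "Yes, all beans are roasted on site every week."),
    ("Do you offer oat milk?", "Yes, oat and almond milk are available at no extra charge."),
]


def local_business_jsonld(b: dict) -> dict:
    """Build a schema.org LocalBusiness object from a flat dict."""
    return {
        "@type": b["type"],
        "name": b["name"],
        "url": b["url"],
        "telephone": b["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": b["street"],
            "addressLocality": b["city"],
            "addressRegion": b["region"],
            "postalCode": b["postal_code"],
            "addressCountry": b["country"],
        },
        "openingHours": b["hours"],
    }


def faq_jsonld(pairs) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(*nodes) -> str:
    """Wrap one or more schema objects in a single JSON-LD <script> tag."""
    doc = {"@context": "https://schema.org", "@graph": list(nodes)}
    return ('<script type="application/ld+json">\n'
            + json.dumps(doc, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    print(script_tag(local_business_jsonld(business), faq_jsonld(faqs)))
```

Paste the printed tag into the page's `<head>`, or have your CMS template render it, and then re-run Google's Rich Results Test on the live URL. Generating the markup from one data source means it is rebuilt after site changes rather than hand-edited, which makes the "validate after every update" step easier.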

In practice, most SMBs have either no schema at all or schema that was added once and never maintained. At Moonrank, we've found that fixing schema alone often produces measurable improvements in AI engine citation rates within the first 30 days.
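Schema tends to drift after site changes, so a small automated check can catch regressions between manual Rich Results Test runs. The sketch below uses only Python's standard library. It pulls every JSON-LD block out of a page's HTML, flags blocks that fail to parse, and checks a LocalBusiness node for the fields listed earlier in this section. The field checklist and function names are assumptions for illustration. They are not an official requirement from Google or schema.org. The check also matches only the exact `@type` you pass in, so subtypes such as `Restaurant` must be passed explicitly.

```python
import json
from html.parser import HTMLParser

# Hypothetical minimal checklist based on the fields listed above
# (name, address, phone, hours); not an official Google or schema.org requirement.
EXPECTED_FIELDS = ("name", "address", "telephone", "openingHours")


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def audit_jsonld(html: str, business_type: str = "LocalBusiness") -> list[str]:
    """Return human-readable problems found in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    if not parser.blocks:
        return ["no JSON-LD blocks found"]

    problems, nodes = [], []
    for i, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {i}: invalid JSON ({exc.msg})")
            continue
        # A block may hold a single object, a list of objects, or an @graph container.
        if isinstance(data, dict) and "@graph" in data:
            nodes.extend(data["@graph"])
        elif isinstance(data, list):
            nodes.extend(data)
        else:
            nodes.append(data)

    matches = []
    for node in nodes:
        if not isinstance(node, dict):
            continue
        types = node.get("@type", [])
        if isinstance(types, str):
            types = [types]
        if business_type in types:
            matches.append(node)

    if not matches:
        problems.append(f"no {business_type} node found")
    for node in matches:
        for field_name in EXPECTED_FIELDS:
            if not node.get(field_name):
                problems.append(f"{business_type} missing '{field_name}'")
    return problems
```

To use it, fetch your own page's HTML (for example with `urllib.request.urlopen`) and pass it to `audit_jsonld`. An empty list means nothing on this minimal checklist is missing.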

| Schema Type | Relevant For | AI Engine Impact |
|---|---|---|
| LocalBusiness | Restaurants, retail, service businesses | High: enables location-based recommendations |
| Product | E-commerce, Shopify stores | High: surfaces pricing and availability in AI answers |
| FAQ | All business types | Very high: directly extracted by answer engines |
| Article | Blogs, content publishers | Medium: signals topical authority and freshness |
| AggregateRating | Any business with reviews | Medium: builds trust signals for AI recommendations |

Mistake 3: Publishing Thin or Infrequent Content

Thin or infrequent content is an AI search optimization mistake that compounds over time — the less you publish, the less signal AI engines have to recognize your brand as an authority in your niche. AI engines like Perplexity and ChatGPT favor brands that consistently produce substantive, topically relevant content. A blog with five posts from 2022 doesn't signal authority. It signals abandonment [7].

What "Thin" Means in the AI Search Context

Thin content isn't just short content. In the GEO context, thin content means:

- Content that doesn't directly answer specific questions in your niche

- Pages with no structured headings, making it hard for AI to extract answers

- Generic product descriptions with no differentiation or detail

- Blog posts that cover a topic superficially without citing sources or providing unique insight

- Duplicate or near-duplicate content across multiple pages

As MarTech notes, writing for GEO (Generative Engine Optimization) "feels like early SEO all over again" — the shift from ranking to extraction requires content that answers questions directly and makes expertise easy to use [7].

The Content Frequency Problem

Most SMB owners don't have time to publish daily. That's the honest reality. But AI engines reward consistent publishing. One practical solution is automating content generation entirely — Moonrank publishes fresh SEO content to your site every day, automatically, without you writing a single word. That's the kind of publishing cadence that builds topical authority in AI engine training and retrieval cycles.

Pro Tip: Don't just publish more. Publish more specifically. Each piece of content should target a distinct question your ideal customer would ask ChatGPT or Perplexity. "Best [product type] for [specific use case]" tends to outperform generic category pages.

A real-world example: an SMB client in the specialty coffee space was publishing roughly two blog posts per month. After switching to daily automated content covering specific coffee preparation questions, brewing equipment comparisons, and origin stories, their brand started appearing in Perplexity responses within six weeks. Volume and specificity both matter.

Mistake 4: Ignoring llms.txt and AI Crawler Signals

Ignoring llms.txt is one of the newer AI search optimization mistakes, but it is becoming increasingly costly as AI engines formalize how they crawl and index web content. llms.txt is a proposed convention that is similar in concept to robots.txt but aimed at large language models rather than traditional crawlers. It is a Markdown file in your site's root directory that tells AI systems which parts of your site to read, trust, and use when forming recommendations [8].

What llms.txt Actually Does

Think of llms.txt as a guided tour for AI crawlers. Without it, an AI engine visiting your site has to guess which pages are authoritative, which content is current, and what your business actually does. With a properly configured llms.txt file, you're explicitly directing the crawler to:

- Your most important service and product pages

- Your most authoritative blog content

- Your business description and entity information

- Lower-priority pages that AI systems can skip when they need a shorter summary (to block AI crawlers outright, use robots.txt)

Most SMBs don't have an llms.txt file at all. This isn't because it's hard. Most traditional SEO tools and agencies were simply built before the convention was proposed. The q-tech.org analysis of AI versus traditional SEO confirms that AI search requires a fundamentally different technical infrastructure than what most businesses currently have in place [8].
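llms.txt only helps if AI crawlers can reach your site in the first place, and a leftover robots.txt rule can quietly block them. The Python below is a hedged sketch of that check. It uses the standard library's `urllib.robotparser` to test a robots.txt file against a few AI user-agent tokens. The token list is an assumption based on names the vendors have published: `GPTBot` and `OAI-SearchBot` for OpenAI, `ClaudeBot` for Anthropic, `PerplexityBot` for Perplexity, and `Google-Extended`, a robots.txt control token for Google's AI models. Confirm each token against current vendor documentation, because these change. The function name and sample file are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI user-agent tokens; verify against each vendor's current docs.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI user agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_CRAWLERS}


# Hypothetical robots.txt that blocks GPTBot everywhere and everyone else from /admin/.
example_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    results = ai_crawler_access(example_robots, "https://www.example.com/services")
    for bot, allowed in results.items():
        print(f"{bot:16} {'allowed' if allowed else 'BLOCKED'}")
```

`urllib.robotparser` only does simple prefix matching and does not understand the `*` and `$` path wildcards some sites use. Treat its answer as a first pass, not a final verdict.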

How to Implement llms.txt

- Create a plain text file named llms.txt in your site's root directory.

- Include a brief description of your business and what you do.

- List your most important pages with brief descriptions of their content.

- Group lower-priority pages under an "Optional" section so AI systems know what they can skip.

- Update it whenever you add significant new content or change your services.
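The steps above map onto the Markdown layout used by the llms.txt proposal (published at llmstxt.org). The layout has an H1 with the site name, a blockquote summary, H2 sections of annotated links, and an "Optional" section that AI systems may skip when they need a shorter context. The Python sketch below renders that layout from a small data structure. The business, URLs, and class names are hypothetical, and this is one way to keep the file updated, not a required tool.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    title: str
    url: str
    note: str = ""  # optional one-line description shown after the link


@dataclass
class LlmsTxt:
    name: str
    summary: str
    sections: dict[str, list[Link]] = field(default_factory=dict)
    optional: list[Link] = field(default_factory=list)  # pages AI systems may skip

    def render(self) -> str:
        """Render the Markdown layout described by the llms.txt proposal."""
        lines = [f"# {self.name}", "", f"> {self.summary}", ""]
        # Regular sections first, then the special "Optional" section last.
        for heading, links in [*self.sections.items(), ("Optional", self.optional)]:
            if not links:
                continue
            lines += [f"## {heading}", ""]
            for link in links:
                suffix = f": {link.note}" if link.note else ""
                lines.append(f"- [{link.title}]({link.url}){suffix}")
            lines.append("")
        return "\n".join(lines)


# Hypothetical business and URLs, for illustration only.
site = LlmsTxt(
    name="Example Coffee Roasters",
    summary="Independent coffee roaster and cafe in Springfield, IL, selling single-origin beans online and in store.",
    sections={
        "Services": [
            Link("Wholesale beans", "https://www.example.com/wholesale", "pricing and minimum orders"),
        ],
        "Guides": [
            Link("Pour-over brewing guide", "https://www.example.com/blog/pour-over"),
        ],
    },
    optional=[Link("Company history", "https://www.example.com/about/history")],
)

if __name__ == "__main__":
    print(site.render())
```

Save the output as llms.txt in your site root so it is served at /llms.txt. Regenerate it whenever you add or retire key pages.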

This is a technical task that intimidates many business owners — but it's exactly the kind of invisible infrastructure that separates brands that get recommended by AI engines from brands that don't.

Mistake 5: Not Tracking Your AI Search Visibility

Not tracking how your brand appears in AI engine responses is an AI search optimization mistake that leaves you flying blind — you can't fix what you can't measure. Most businesses still track Google rankings and organic traffic. Those metrics don't tell you whether ChatGPT is recommending you, how often Gemini mentions your brand, or what Perplexity says when someone asks about your category [9].

The Measurement Gap Most SMBs Have

Traditional SEO tools like rank trackers and Google Search Console were built for a world where search meant a list of blue links. AI search doesn't work that way. When a user asks ChatGPT for a restaurant recommendation, there's no "position 3" to track. The question is: does your brand appear in the response at all, and how is it described?

Without dedicated AI visibility tracking, you're likely:

- Investing in optimization work without knowing if it's producing results

- Missing competitive intelligence about which brands AI engines favor in your category

- Unable to identify which content or technical changes actually moved the needle

- Reporting the wrong metrics to yourself or your stakeholders

What Good AI Visibility Tracking Looks Like

Effective tracking monitors your brand's appearance across ChatGPT, Claude, Perplexity, and Gemini on a regular basis — ideally daily or weekly. It captures not just whether you appear, but the context and sentiment of how you're described. Industry analysts note that as AI search matures, visibility metrics will become as standard as domain authority or click-through rate are today [9].

Kellogg Insight's analysis of the zero-click search trend reinforces this: businesses need to evolve their measurement frameworks to account for AI-driven answer generation, not just traditional click-based traffic [10].

Pro Tip: Set up a simple weekly check: search your top 3-5 service or product categories in ChatGPT and Perplexity. Record whether your brand appears. This manual baseline gives you something to compare against as you implement technical fixes — and it often reveals competitor patterns you'd never spot in Google Analytics.
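The weekly check above is easy to turn into a simple log. The Python below is a minimal sketch that assumes you paste each AI engine's answer into a record by hand; no engine API is used. For each week and engine, it computes the share of recorded responses that mention your brand. The `Check` record, the function names, and the sample data are hypothetical. The matching is a naive case-insensitive phrase search that won't catch misspellings or abbreviations of your brand name.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Check:
    """One manual check: a query typed into an AI engine and the answer it gave."""
    week: str      # hypothetical label, e.g. "2026-W05"
    engine: str    # e.g. "ChatGPT", "Perplexity", "Gemini", "Claude"
    query: str     # the category query you typed
    response: str  # the answer text copied from the engine


def mentions(brand: str, text: str) -> bool:
    """Naive case-insensitive whole-phrase match for the brand name."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, text, re.IGNORECASE) is not None


def mention_rate_by_engine(checks: list[Check], brand: str) -> dict[tuple[str, str], float]:
    """Share of recorded responses, per (week, engine), that mention the brand."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for check in checks:
        key = (check.week, check.engine)
        totals[key] += 1
        if mentions(brand, check.response):
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}


if __name__ == "__main__":
    # Hypothetical sample log, for illustration only.
    log = [
        Check("2026-W05", "ChatGPT", "best coffee roaster in Springfield",
              "Try Example Coffee Roasters or Bean Co."),
        Check("2026-W05", "Perplexity", "best coffee roaster in Springfield",
              "Bean Co. and Daily Grind are popular choices."),
    ]
    for (week, engine), rate in sorted(mention_rate_by_engine(log, "Example Coffee").items()):
        print(f"{week} {engine:10} {rate:.0%}")
```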

Mistake 6: Weak Authority and Citation Signals

Weak authority signals are a persistent AI search optimization mistake because AI engines don't just find your content — they decide whether to trust it enough to recommend it to users. Authority in the AI search context means being cited by, referenced by, or associated with credible third-party sources that AI engines already trust [11].

How AI Engines Assess Authority

AI engines like ChatGPT and Perplexity synthesize information from across the web. They weight sources based on signals that include:

- External citations — are credible publications, directories, or industry sites mentioning your brand?

- Consistent NAP data — is your Name, Address, and Phone number consistent across directories and your own site?

- Review volume and recency — do you have recent, substantive reviews on Google, Yelp, or industry-specific platforms?

- Content depth — does your published content demonstrate genuine expertise, or does it read as generic filler?

- Author credibility — are the people behind your content identifiable and credible within your industry?
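NAP consistency is one of the few signals on this list you can check mechanically. The sketch below normalizes name, address, and phone values from several listings. It handles case, punctuation, a few common street abbreviations, and phone formatting, then reports fields that differ from your website's version. It assumes North American 10-digit phone numbers. The abbreviation table, listing data, and function names are all hypothetical, and real address matching usually needs a more complete normalizer.

```python
import re

# Hypothetical abbreviation table; real address normalizers cover far more cases.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "suite": "ste", "boulevard": "blvd"}


def normalize_text(value: str) -> str:
    """Lowercase, strip punctuation, and abbreviate common street words."""
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def normalize_phone(value: str) -> str:
    """Keep digits only; assumes North American numbers and drops a leading country code."""
    digits = re.sub(r"\D", "", value)
    return digits[-10:] if len(digits) >= 10 else digits


NORMALIZERS = {"name": normalize_text, "address": normalize_text, "phone": normalize_phone}


def nap_mismatches(listings: dict[str, dict[str, str]], reference: str) -> list[str]:
    """Compare every listing's NAP fields with the reference listing (e.g. your website)."""
    ref = listings[reference]
    issues = []
    for source, nap in listings.items():
        if source == reference:
            continue
        for field_name, normalize in NORMALIZERS.items():
            theirs, ours = nap.get(field_name, ""), ref.get(field_name, "")
            if normalize(theirs) != normalize(ours):
                issues.append(f"{source}: {field_name} differs ({theirs!r} vs {ours!r})")
    return issues


if __name__ == "__main__":
    # Hypothetical listings, for illustration only.
    listings = {
        "website": {"name": "Example Coffee Roasters", "address": "123 Main Street, Springfield, IL", "phone": "(217) 555-0100"},
        "directory": {"name": "Example Coffee", "address": "123 Main St., Springfield, IL", "phone": "217.555.0100"},
    }
    for issue in nap_mismatches(listings, reference="website"):
        print(issue)
```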

Search Engine Land's analysis of AI search investment mistakes highlights that many businesses skip authority-building entirely, focusing on on-page optimization while neglecting the external citation ecosystem that AI engines use to validate recommendations [11].

Building Authority Practically

You don't need to be featured in the New York Times. Local authority signals work. Getting cited in a regional business directory, earning a mention in an industry newsletter, or having your product reviewed on a niche blog all contribute to the citation footprint that AI engines use to verify your brand's existence and relevance. Consistency matters more than prestige at the SMB level.

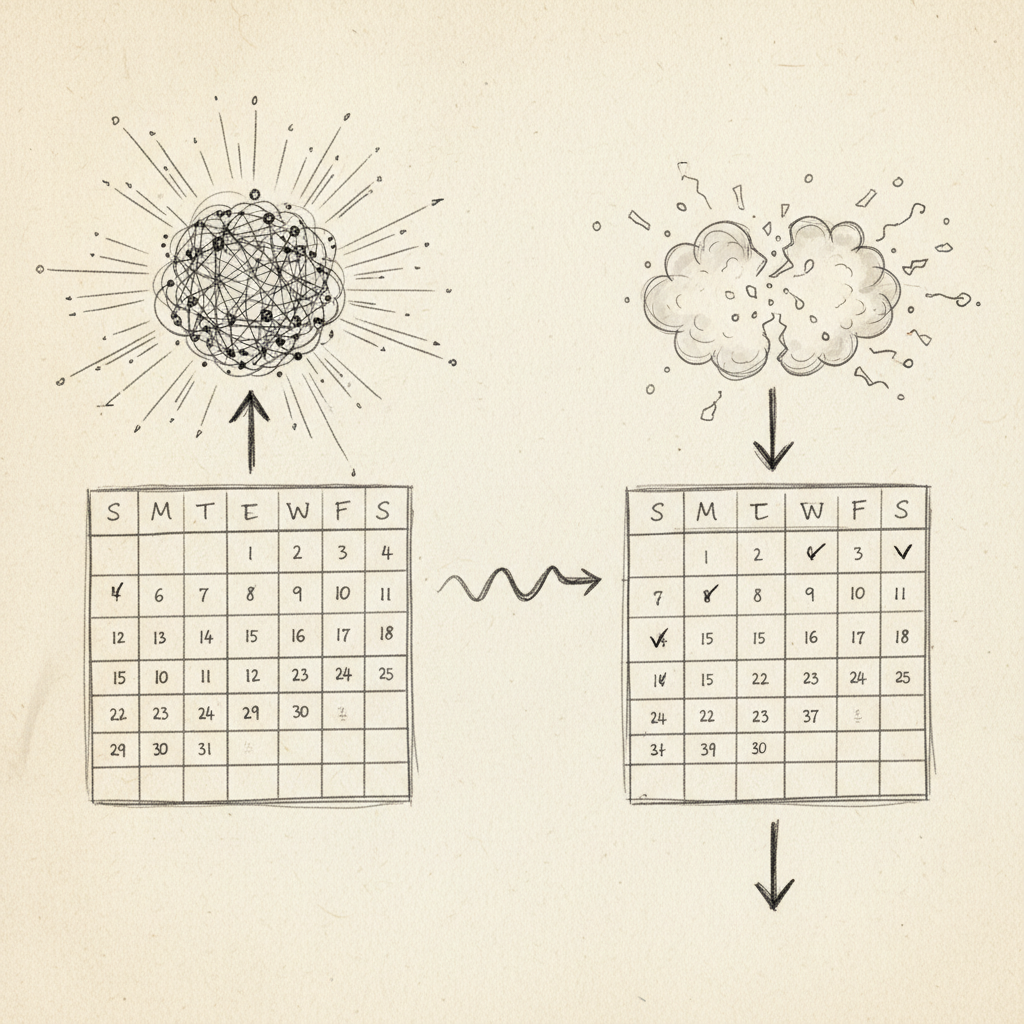

Mistake 7: Using the Wrong Metrics to Measure Success

Using traditional SEO metrics to evaluate AI search performance is an AI search optimization mistake that leads businesses to underinvest in the right strategies. If you're measuring success only by Google keyword rankings and organic click-through rates, you'll systematically miss the value being created (or lost) in AI search channels [12].

Old Metrics vs. AI Search Metrics

| Traditional SEO Metric | AI Search Equivalent | Why It Matters |
|---|---|---|
| Keyword ranking position | Brand mention frequency in AI responses | AI doesn't rank pages; it includes or excludes brands |
| Organic click-through rate | AI recommendation sentiment score | Many AI searches result in zero clicks to any site |
| Backlink count | Citation footprint across trusted sources | AI engines weight citation context, not just link volume |
| Domain authority score | Topical authority depth in your niche | AI rewards consistent subject-matter coverage |
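To make "brand mention frequency" measurable, here is a hedged Python sketch that computes two numbers from a batch of recorded AI responses. The first is how often each brand appears (mention frequency). The second is each brand's share of all brand mentions in the batch, a simple share-of-voice figure. The function name, brand names, and responses are hypothetical, and the matching is a naive phrase search. Sentiment scoring is left out because it needs a separate model or manual review.

```python
import re
from collections import Counter


def mention_metrics(responses: list[str], brands: list[str]) -> dict[str, dict[str, float]]:
    """Mention frequency and share of voice for each brand across recorded AI responses.

    mention_frequency: fraction of responses that mention the brand at least once.
    share_of_voice: the brand's mentions divided by all brand mentions in the batch.
    """
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Naive case-insensitive phrase match; each brand counts at most once per response.
            if re.search(r"\b" + re.escape(brand) + r"\b", text, re.IGNORECASE):
                counts[brand] += 1
    total_mentions = sum(counts.values())
    n = len(responses)
    return {
        brand: {
            "mention_frequency": counts[brand] / n if n else 0.0,
            "share_of_voice": counts[brand] / total_mentions if total_mentions else 0.0,
        }
        for brand in brands
    }


if __name__ == "__main__":
    # Hypothetical recorded answers to category queries, for illustration only.
    responses = [
        "Top picks: Example Coffee and Bean Co.",
        "Bean Co. is the local favorite.",
    ]
    for brand, metrics in mention_metrics(responses, ["Example Coffee", "Bean Co"]).items():
        print(brand, metrics)
```

Tracking these two numbers week over week gives you a ranking-free counterpart to keyword position. It shows whether you are being included, and how you compare with the competitors AI engines already favor.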

Reframing Your Reporting

Search Engine Land's guidance on AI search investment emphasizes aligning your AI optimization metrics with your actual business goals — not just copying over the KPI framework from your Google Analytics dashboard [11]. Results may vary by industry and business type, but the principle holds across categories: measure what AI engines actually do, not what Google does.

One limitation worth acknowledging: as of 2026, standardized AI search measurement tools are still maturing. The field doesn't yet have a universal equivalent of Google Search Console. That's changing fast, but it means your tracking approach needs to be intentional rather than passive.

How to Fix These Mistakes: A Decision Framework

Fixing AI search optimization mistakes requires a prioritized, systematic approach — not a scattershot list of tactics applied randomly. Here's how to sequence your efforts based on your current situation.

The Triage Framework

- Audit first. Before changing anything, run a technical AI audit to identify your most critical gaps — missing schema, broken structured data, absent llms.txt, and thin content pages. You can't prioritize fixes without a baseline.

- Fix technical signals. Schema markup and llms.txt are foundational. These are the signals AI engines use before they even evaluate your content quality. Fix these before investing in new content.

- Build content volume and specificity. Once your technical foundation is solid, consistent daily publishing builds the topical authority that AI engines reward. Focus on answering specific questions your customers actually ask AI engines.

- Build your citation footprint. Pursue mentions in credible directories, industry publications, and review platforms. This is the external validation that AI engines use to confirm your authority.

- Track and iterate. Implement AI visibility tracking across ChatGPT, Gemini, Claude, and Perplexity. Review results weekly. Adjust content strategy based on which topics and formats are generating brand mentions.

Build vs. Buy: What Makes Sense for SMBs

Doing all of this manually is genuinely difficult for a business owner who also runs daily operations. The honest assessment:

- DIY approach: Requires learning schema markup, llms.txt configuration, content strategy, and visibility tracking separately. Time-intensive. Error-prone without technical background.

- Traditional SEO agency: Expensive ($3,000+ per month is typical), and most agencies are still primarily Google-focused rather than AI-search-native.

- Purpose-built AI search automation: Tools designed specifically for GEO/AEO — like Moonrank — handle schema, llms.txt, daily content, and visibility tracking automatically for a fraction of agency cost. Our team at Moonrank recommends this approach for most SMBs who need results without adding a full-time technical role.

The key differentiator to look for in any tool is whether it was built specifically for AI search visibility or whether AI features were bolted onto a traditional SEO platform. The underlying architecture matters because the optimization signals are fundamentally different.

Sources & References

- [1] Kellogg Insight, "As AI Eats Web Traffic, Don't Panic — Evolve," 2024

- [2] Sedestral, "10 AI Search Optimization Mistakes That Kill Your Visibility," 2025

- [3] Wikipedia, "Search Engine Optimization," 2026

- [4] Trulata, "The Biggest Mistake Companies Make With AI Search Is Treating It Like SEO," 2025

- [5] Pittsburgh SEO Services, "Common Mistakes Businesses Make When Optimizing for AI Search," 2025

- [6] Insightland, "The Biggest Myths About AI Search — What You Really Need to Know," 2025

- [7] MarTech, "Writing for GEO Feels Like Early SEO All Over Again," 2025

- [8] Q-Tech, "AI vs Traditional SEO: The Key Differences and Why It Matters," 2025

- [9] Workshop Digital, "5 Mistakes Hurting Your AI Visibility (And How to Fix Them)," 2025

- [10] Kellogg Insight, "As AI Eats Web Traffic, Don't Panic — Evolve," 2024

- [11] Search Engine Land, "3 Common Mistakes to Avoid When Investing in AI Search," 2025

- [12] Recomaze, "AI SEO Mistakes: 15 Common Errors That Kill Your AI Search Visibility," 2026

Frequently Asked Questions

1. What are the most common AI search optimization mistakes SMBs make?

The most common AI search optimization mistakes include treating AI engines like Google (they use different signals), missing or broken schema markup, publishing thin or infrequent content, ignoring llms.txt configuration, not tracking AI visibility, weak citation signals, and measuring performance with traditional SEO metrics. Each of these individually reduces your chances of being recommended by ChatGPT, Gemini, Claude, or Perplexity.

2. Does good Google SEO automatically help with AI search?

Not reliably. Google SEO and AI search optimization share some foundations — quality content, site speed, clean technical structure — but AI engines like Perplexity and ChatGPT use additional signals like structured data, citation authority, and content specificity that traditional SEO doesn't fully address. A strong Google ranking doesn't guarantee AI recommendation, and vice versa.

3. How does schema markup affect AI engine recommendations?

Schema markup (structured data that explicitly describes your business to crawlers) is one of the most direct signals AI engines use to understand what your business does and whether to recommend it. Missing or invalid schema means AI crawlers have to guess your business context — and they'll default to brands whose data is clean and complete. FAQ schema, LocalBusiness schema, and Product schema are the highest-impact types for most SMBs.

4. What is llms.txt and why does it matter for AI search?

llms.txt is a proposed file format, placed in your site's root directory, that guides large language models (the systems powering ChatGPT, Gemini, and similar AI engines) to the content you most want them to read, trust, and use. Without it, AI crawlers index your site without guidance, potentially missing your most important pages or over-indexing outdated content. It is often described as an AI-era counterpart to robots.txt. The difference is that llms.txt guides rather than blocks, and controlling crawler access is still robots.txt's job. Most SMB sites don't have one yet.

5. How often should I publish content to rank well in AI search?

Daily publishing is the gold standard for building topical authority with AI engines. That said, consistent weekly publishing is significantly better than sporadic monthly posts. The key is specificity — each piece should answer a distinct question your customers would ask ChatGPT or Perplexity, not just cover broad category topics. Automated content tools can make daily publishing achievable without adding to your workload.

6. How do I track whether my business is being recommended by AI engines?

Start manually: search your product or service category in ChatGPT, Perplexity, Gemini, and Claude, and record whether your brand appears. For systematic tracking, purpose-built AI visibility monitoring tools track your brand's mention frequency, context, and sentiment across multiple AI engines on a regular basis. This data lets you see which optimization changes are actually working — something traditional Google Analytics can't show you.

7. Is it expensive to fix AI search optimization mistakes?

It doesn't have to be. Traditional SEO agencies typically charge $3,000 or more per month, and most aren't AI-search-native. Purpose-built AI search optimization platforms can handle schema markup, llms.txt, daily content publishing, and visibility tracking automatically for a fraction of that cost. The technical fixes themselves, schema and llms.txt, are largely one-time implementations. Once they're in place, they need periodic checks after site changes rather than ongoing agency fees.

8. How long does it take to see results after fixing AI search mistakes?

Results vary depending on your niche, competition, and starting point. In practice, technical fixes like schema markup and llms.txt can produce measurable improvements in AI citation rates within 30 days. Content authority builds more slowly — typically 6 to 12 weeks of consistent publishing before you see significant increases in brand mentions across AI engines. Tracking from day one is essential so you can see incremental progress.

Conclusion

The AI search optimization mistakes covered here share a common root: they all come from applying old frameworks to a fundamentally new system. ChatGPT, Gemini, Claude, and Perplexity don't work like Google. They reward structured data, citation authority, content specificity, and technical accessibility signals that most businesses haven't prioritized yet. That gap is both a problem and an opportunity.

The good news is that these AI search optimization mistakes are fixable. Schema markup, llms.txt, consistent content publishing, and visibility tracking aren't mysteries reserved for enterprise marketing teams. They're concrete, implementable steps that any SMB can take — and the businesses that take them now will hold a real advantage over competitors who are still waiting for the landscape to "settle."

If you want to fix these mistakes without hiring an agency or learning technical SEO from scratch, Moonrank handles all of it automatically: daily content publishing, schema and llms.txt optimization, citation building, and AI visibility tracking across ChatGPT, Gemini, Claude, and Perplexity — for $99/month with a 3-day free trial. Visit www.moonrank.ai to see how your business currently appears in AI search, and start getting recommended.
